MemOCR: Layout-Aware Visual Memory for Efficient Long-Horizon Reasoning

🌟 Model Description

MemOCR is a visual memory agent that dynamically adapts information density during memory drafting and reading, and optimizes visual layouts to highlight key information.

This checkpoint is fine-tuned from Qwen2.5-VL-7B-Instruct with budget-aware training objectives.

Key Capabilities

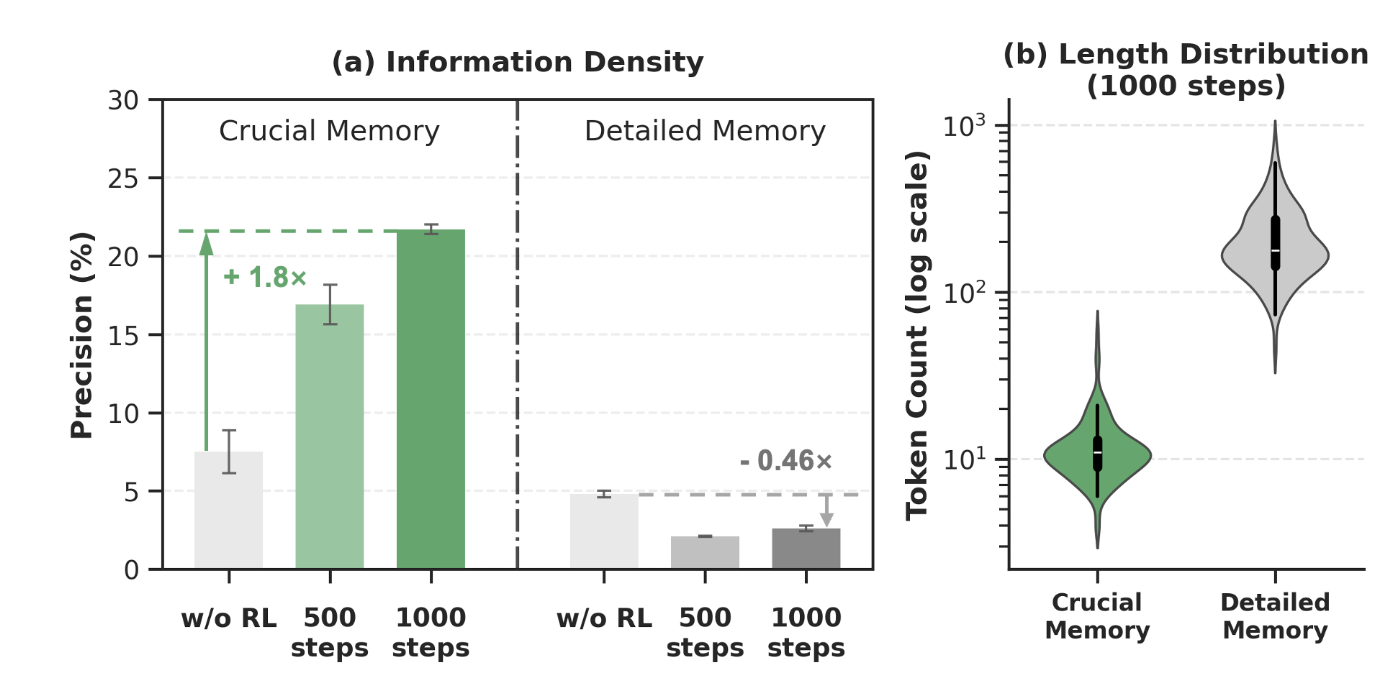

- Adaptive Information Density: Dynamically adjusts memory content richness based on task requirements

- Budget-Aware Memory: Optimizes memory usage with explicit token budget constraints

- Dual-Domain Architecture: Separates memory drafting (text domain) from memory reading (vision domain)

- Multi-Hop Reasoning: Evaluated on complex multi-hop question answering tasks such as HotpotQA and 2WikiMultihopQA
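
To make the notion of a token budget concrete, the sketch below estimates how many visual tokens a rendered memory image costs and shrinks the image until it fits a budget. It assumes the Qwen2.5-VL backbone convention of 14-pixel patches merged 2x2, so one visual token covers roughly a 28x28 pixel tile. The helper names `visual_tokens` and `fit_to_budget` are hypothetical and are not part of the MemOCR code base.

```python
import math

PATCH = 14             # assumed ViT patch size of the Qwen2.5-VL backbone
MERGE = 2              # assumed 2x2 patch merging into one visual token
UNIT = PATCH * MERGE   # 28 pixels per token side

def visual_tokens(width: int, height: int) -> int:
    """Approximate visual token count for an image of the given pixel size."""
    cols = max(1, round(width / UNIT))
    rows = max(1, round(height / UNIT))
    return cols * rows

def fit_to_budget(width: int, height: int, budget: int) -> tuple[int, int]:
    """Shrink (width, height), roughly preserving aspect ratio, to <= budget tokens."""
    if budget < 1:
        raise ValueError("budget must be at least one token")
    # Initial guess: scale the pixel area down to the area the budget can cover.
    scale = min(1.0, math.sqrt(budget * UNIT * UNIT / (width * height)))
    while True:
        # Snap each side down to a multiple of UNIT, never below one tile.
        w = max(UNIT, int(width * scale) // UNIT * UNIT)
        h = max(UNIT, int(height * scale) // UNIT * UNIT)
        if (w // UNIT) * (h // UNIT) <= budget:
            return w, h
        scale *= 0.95  # only reached for extreme aspect ratios

full = visual_tokens(1120, 1120)          # 40 x 40 tiles
small = fit_to_budget(1120, 1120, 256)    # size that fits a 256-token budget
print(full, small, visual_tokens(*small))
```

Under these assumptions a 1120x1120 render costs 1600 tokens, so reading the same memory at a 256-token budget means downscaling the image to about a sixth of its area, which is why the layout of the rendered text matters.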

🏗️ Architecture

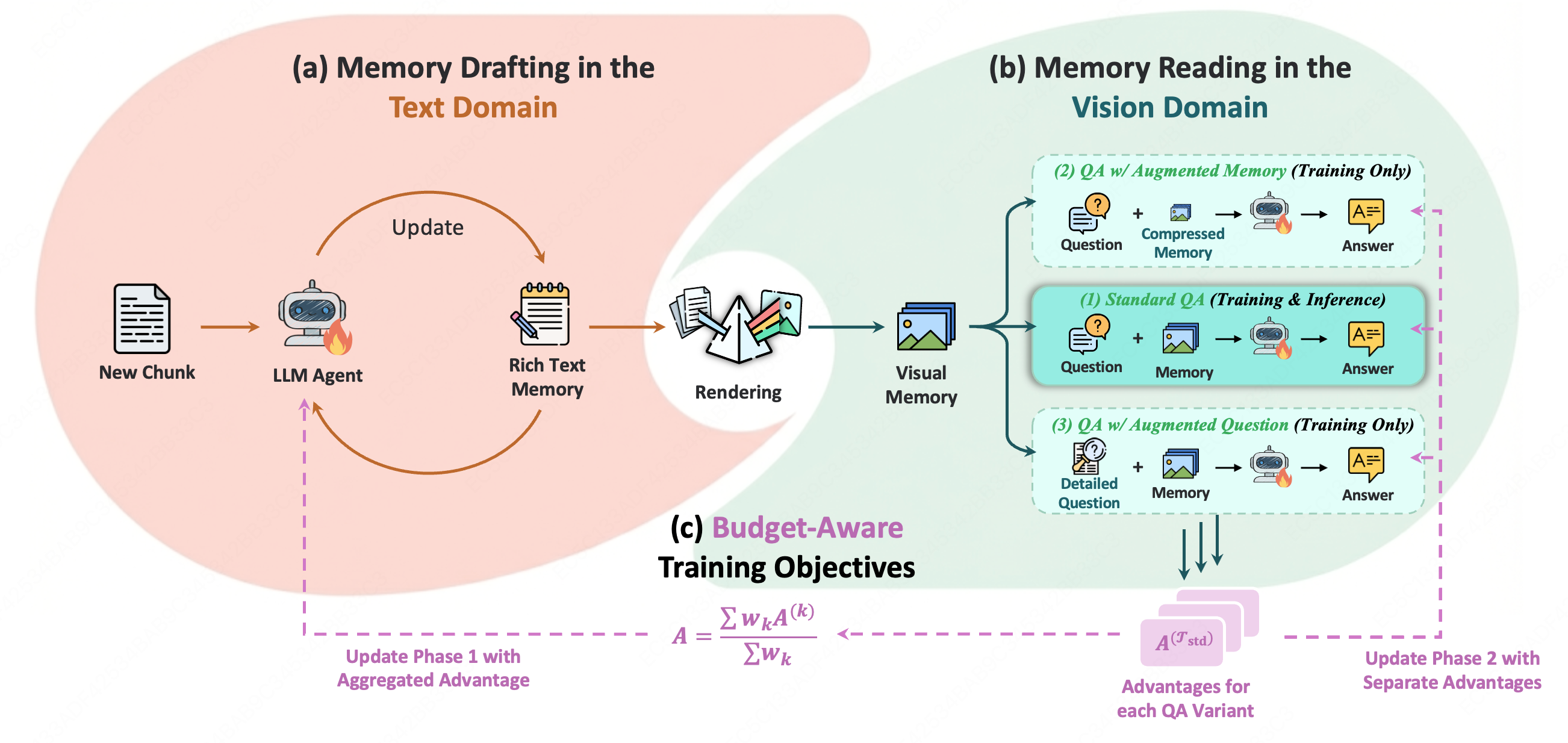

MemOCR consists of two main components:

- Memory Drafting in Text Domain: An LLM agent iteratively refines rich-text memory content based on question-answering feedback

- Memory Reading in Vision Domain: A vision-language model processes rendered visual memory with optimized information density
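
The interplay between the two components can be illustrated with a toy layout model: rich-text memory is parsed into styled spans, each style gets a font size, and downscaling the rendered image makes small text unreadable first. This is only a sketch. The style table `FONT_PX`, the legibility threshold `MIN_LEGIBLE_PX`, and the Markdown-like syntax are assumptions made for illustration, not the renderer used by MemOCR.

```python
from dataclasses import dataclass

# Hypothetical style table: font height in pixels at full render resolution.
FONT_PX = {"h1": 32, "h2": 24, "bold": 18, "body": 12}
MIN_LEGIBLE_PX = 8  # assumed height below which glyphs are no longer readable

@dataclass
class Span:
    text: str
    style: str

def parse_memory(markdown: str) -> list[Span]:
    """Split Markdown-like memory into one styled span per non-empty line."""
    spans = []
    for line in markdown.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith("## "):
            spans.append(Span(line[3:], "h2"))
        elif line.startswith("# "):
            spans.append(Span(line[2:], "h1"))
        elif line.startswith("**") and line.endswith("**") and len(line) > 4:
            spans.append(Span(line[2:-2], "bold"))
        else:
            spans.append(Span(line, "body"))
    return spans

def legible_spans(spans: list[Span], scale: float) -> list[Span]:
    """Spans whose font is still at least MIN_LEGIBLE_PX after downscaling."""
    return [s for s in spans if FONT_PX[s.style] * scale >= MIN_LEGIBLE_PX]

memory = """# Key fact needed for the answer
**Supporting fact**
Low-priority background detail."""

spans = parse_memory(memory)
for scale in (1.0, 0.5, 0.3):
    print(scale, [s.style for s in legible_spans(spans, scale)])
```

In this toy model the heading survives every scale while the body text drops out first, which mirrors the idea of placing key information in visually prominent regions so that it stays readable under tight budgets.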

The framework employs budget-aware training objectives to balance memory informativeness and token efficiency.
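
One possible shape for such an objective, shown purely as an illustration, is to score a drafted memory by how often the reader answers correctly when the same memory is read at several token budgets. The function `budget_aware_reward` and the budget values below are hypothetical. The actual training objectives are defined in the paper and the official repository.

```python
def budget_aware_reward(correct_at_budget: dict[int, bool]) -> float:
    """Fraction of token budgets at which the reader answered correctly."""
    if not correct_at_budget:
        raise ValueError("at least one budget is required")
    return sum(correct_at_budget.values()) / len(correct_at_budget)

# Hypothetical rollouts: the same drafted memory read at three budgets.
robust = {64: True, 256: True, 1024: True}      # key facts survive downscaling
fragile = {64: False, 256: False, 1024: True}   # only readable at full budget

print(budget_aware_reward(robust), budget_aware_reward(fragile))
```

A reward of this kind favors memories whose key content remains answerable at small budgets, which is one way to trade informativeness against token cost.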

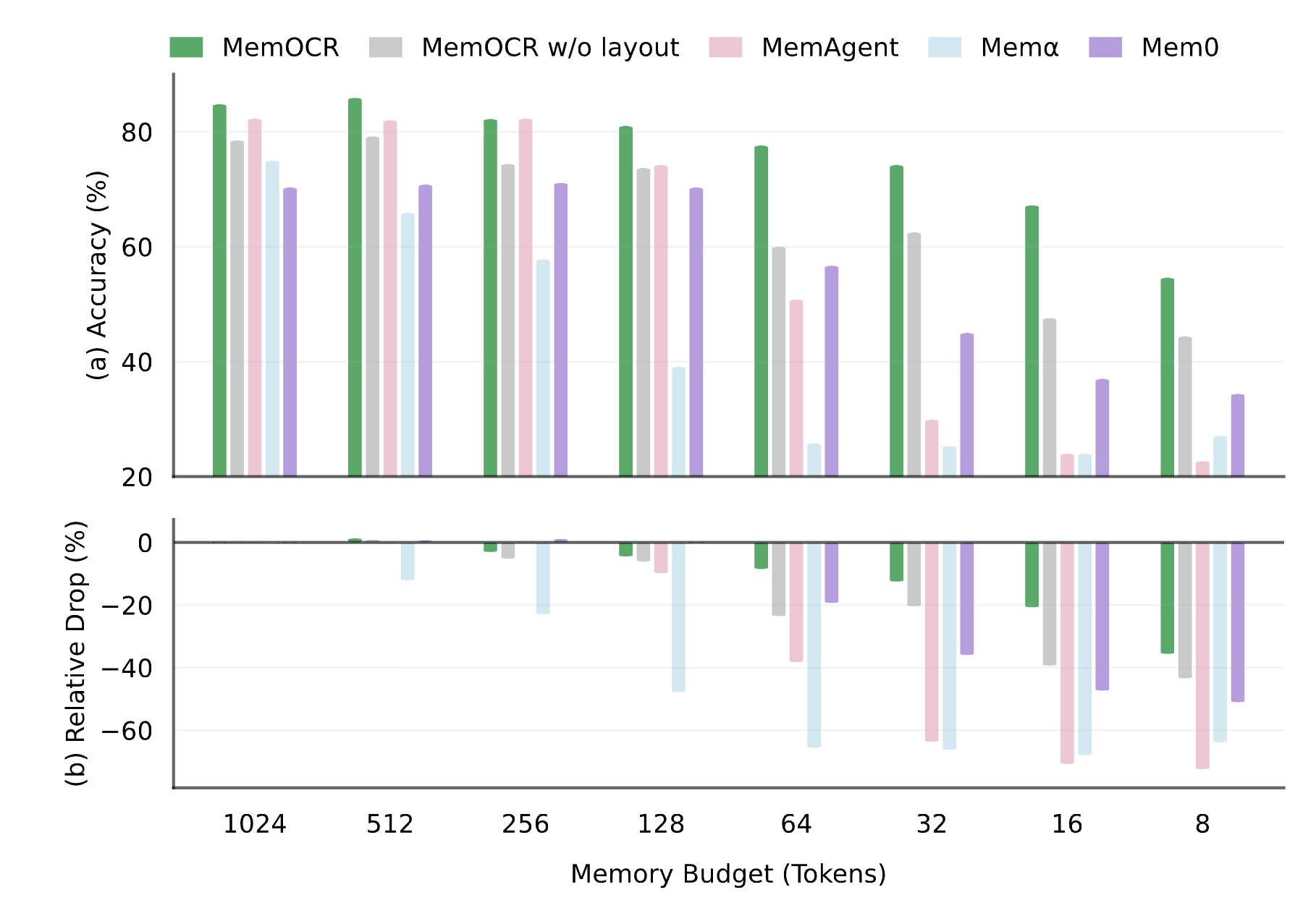

📊 Performance

Main Results

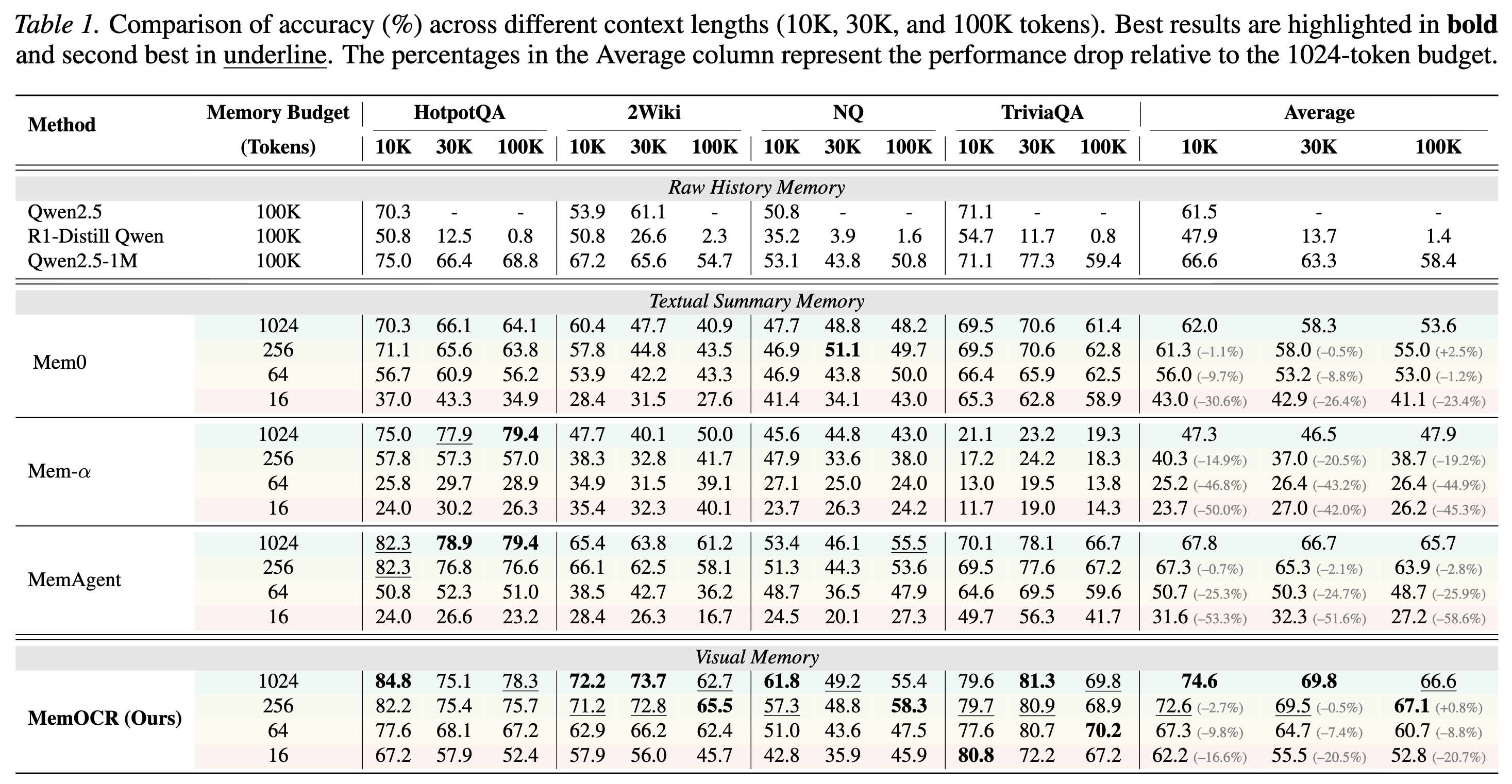

MemOCR achieves state-of-the-art performance across multi-hop and single-hop QA benchmarks:

- HotpotQA: Superior accuracy with efficient memory budgets

- 2WikiMultihopQA: Strong multi-hop reasoning capabilities

- NaturalQuestions & TriviaQA: Excellent knowledge retrieval performance

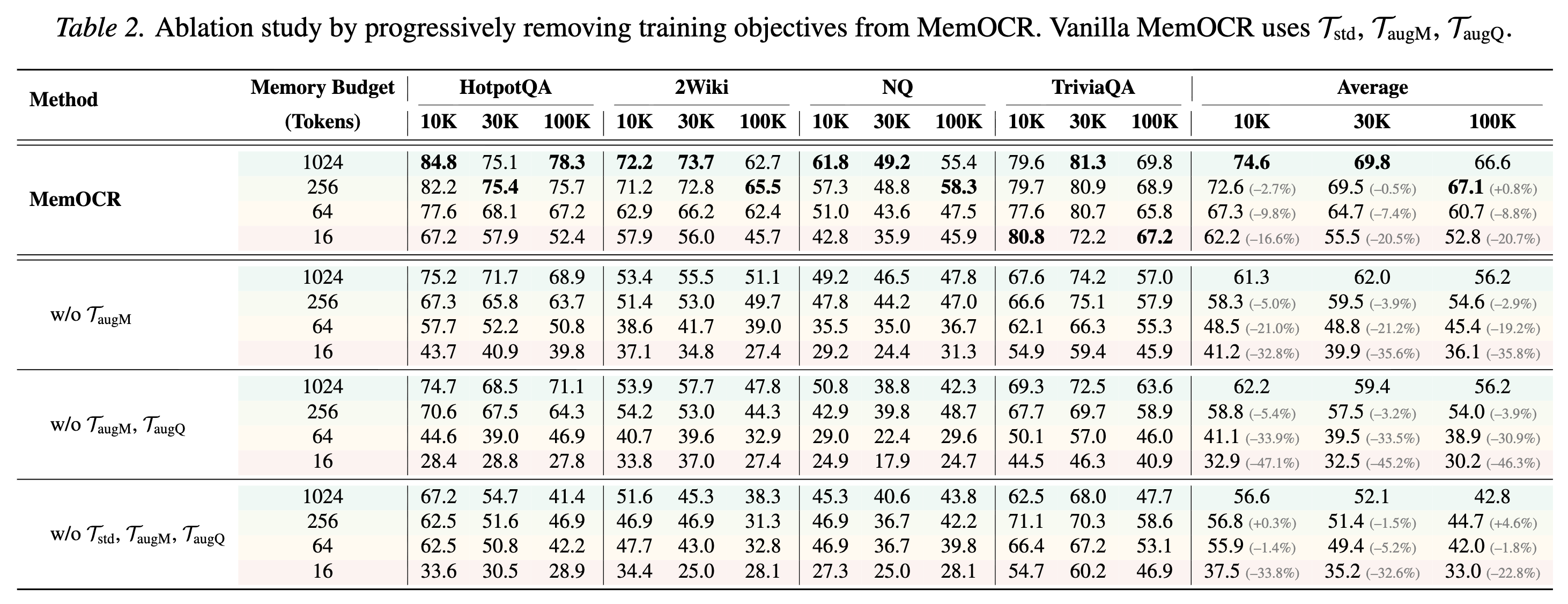

Ablation Studies

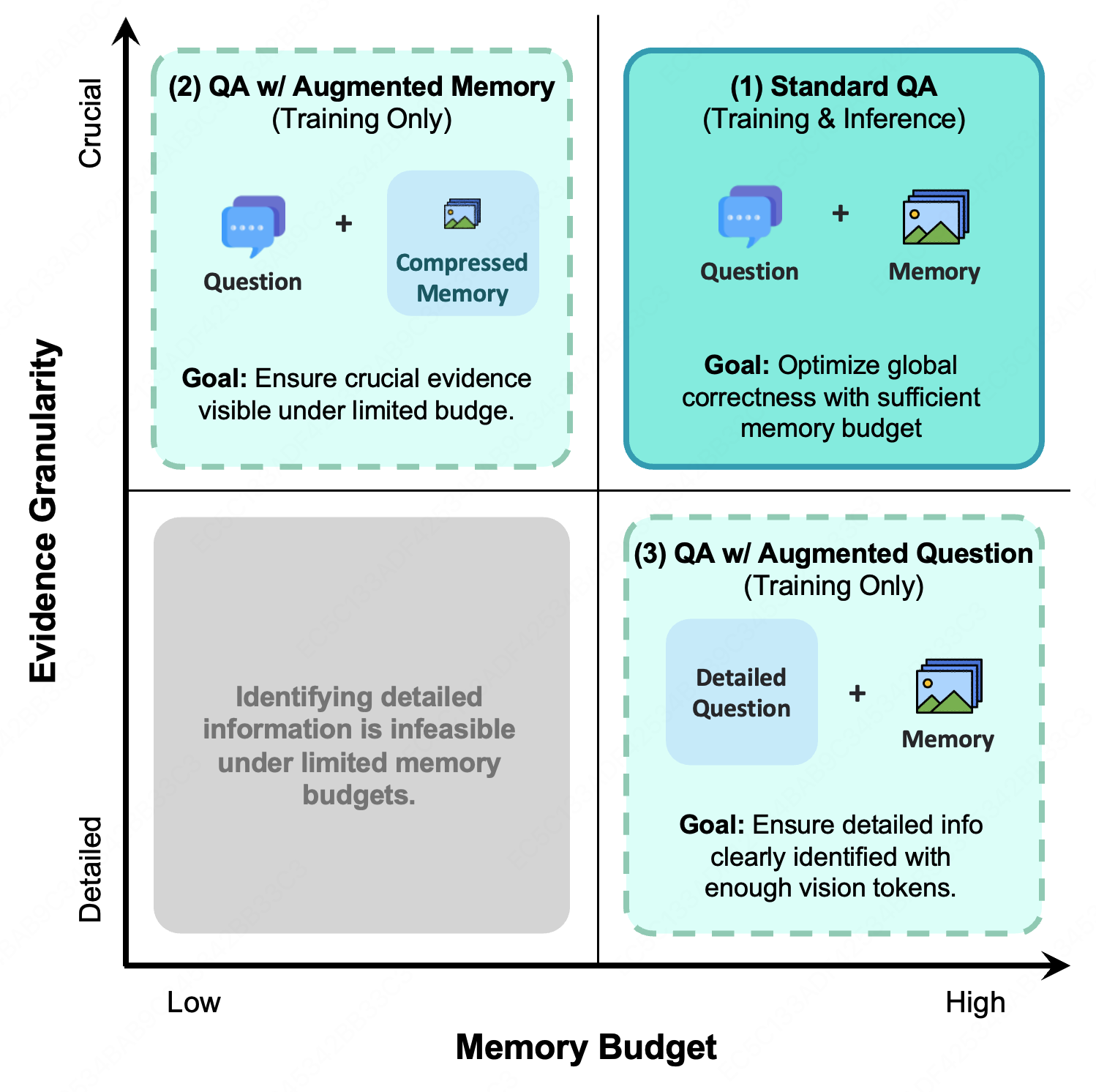

Analysis: Information Density & Budget

🚀 Usage

This model is designed to work with the MemOCR framework. Please refer to the official repository for detailed usage instructions.
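
As a rough orientation only, the sketch below builds a reading request in the chat message layout used by the Qwen2.5-VL backbone, capping the image resolution so that it stays within a visual token budget. It assumes the backbone's convention of a per-image `max_pixels` field and of roughly one token per 28x28 pixel tile. The function `build_read_request`, the file name, and the question are placeholders, and the prompt template MemOCR actually expects is the one in the official repository.

```python
def build_read_request(image_path: str, question: str, token_budget: int) -> list[dict]:
    """Build a Qwen2.5-VL-style chat message for reading a rendered memory image."""
    if token_budget < 1:
        raise ValueError("token_budget must be at least one token")
    # Assumption: one visual token covers about 28 x 28 pixels.
    max_pixels = token_budget * 28 * 28
    return [
        {
            "role": "user",
            "content": [
                {"type": "image", "image": image_path, "max_pixels": max_pixels},
                {"type": "text", "text": question},
            ],
        }
    ]

# Placeholder inputs: a rendered memory image and a question about it.
messages = build_read_request("memory.png", "Who directed the film?", token_budget=256)
print(messages[0]["content"][0]["max_pixels"])
```

The resulting list would then be passed to the processor and model as described in the official usage instructions.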

📚 Citation

If you find MemOCR useful in your research, please consider citing:

@article{shi2026memocr,
  title={MemOCR: Layout-Aware Visual Memory for Efficient Long-Horizon Reasoning},
  author={Yaorui Shi and Shugui Liu and Yu Yang and Wenyu Mao and Yuxin Chen and Qi GU and Hui Su and Xunliang Cai and Xiang Wang and An Zhang},
  journal={arXiv preprint arXiv:2601.21468},
  year={2026},
}

🙏 Acknowledgements

This model is built upon:

- Qwen2.5-VL as the vision-language model backbone

- veRL as the reinforcement learning training framework

- MemAgent for the recurrent module and training dataset

📄 License

This model is licensed under the Apache License 2.0. See the LICENSE file for details.

Dataset License

Training and evaluation datasets are derived from:

- HotpotQA, 2WikiMultihopQA, Natural Questions, TriviaQA: Wikipedia-derived content licensed under CC BY-SA 4.0
